GPT Realtime 2 API - Why Real-Time AI Could Become the Most Important Shift Since the Rise of ChatGPT

When ChatGPT first exploded into the mainstream, the AI industry entered a race centered almost entirely around intelligence.

Every few months, a new model appeared claiming better reasoning, stronger coding abilities, larger context windows, faster inference, or higher benchmark scores. The conversation became deeply focused on model capability itself. Which system could solve harder problems? Which one performed better on standardized tests? Which model could generate more human-like outputs?

For a while, that made sense. The jump in intelligence between generations of models was dramatic enough that raw capability became the defining metric of progress.

But over the last year, something much more subtle has started happening inside the developer ecosystem.

The conversation has slowly begun shifting away from: “Which model is smartest?” towards : “Which model actually feels natural to interact with?”

That change may sound small, but it fundamentally changes how AI systems are built, evaluated, and deployed.

And this is exactly why the GPT-Realtime-2 API is generating so much attention right now.

Because for the first time, a growing number of developers are beginning to realize that the next major leap in AI may not come purely from intelligence gains. It may come from reducing the friction between humans and AI systems to the point where the interaction itself starts feeling seamless.

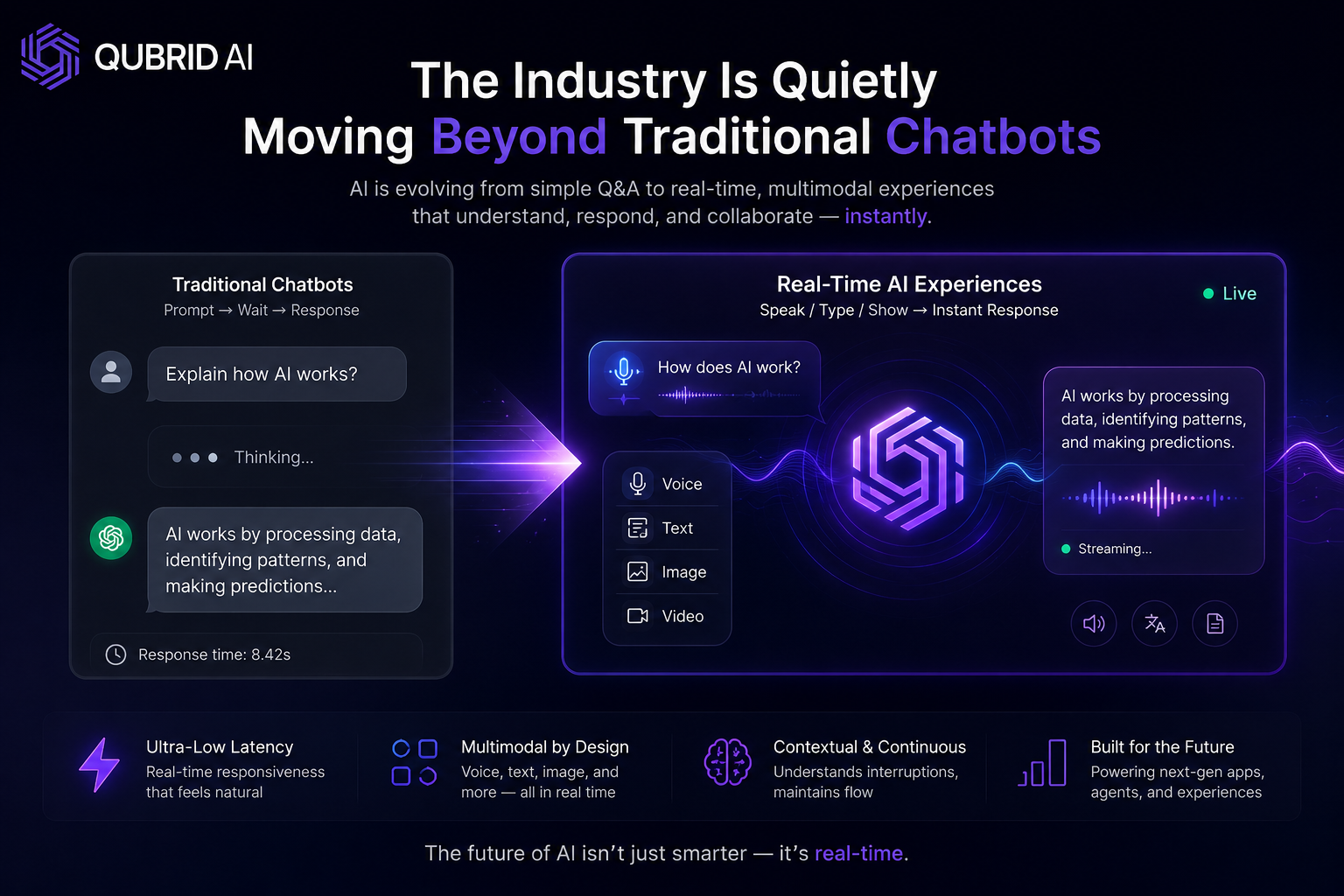

The Industry Is Quietly Moving Beyond Traditional Chatbots

Most first-generation AI products followed a very predictable interaction pattern.

A user typed something. The model processed the request. Several seconds passed. A response appeared. Even as models became dramatically smarter underneath, the actual experience remained surprisingly static.

That model of interaction worked well enough for chatbots, writing assistants, and coding copilots. But the moment AI started moving into voice interfaces, live collaboration systems, and conversational assistants, the weaknesses became obvious.

Human conversations don’t operate in delayed prompt-response cycles.

People interrupt each other. They respond instantly. They change direction mid-sentence. They expect fluid conversational rhythm. And the closer AI systems move toward real-time interaction, the more unnatural traditional LLM behavior begins to feel.

This is why searches related to:

real-time AI APIs

voice AI infrastructure

low-latency multimodal systems

speech-to-speech AI

streaming conversational AI

have started growing rapidly across the developer ecosystem.

The demand is no longer just for “better answers.” It’s for AI systems that can participate in interaction naturally.

That distinction is becoming incredibly important.

Why GPT-Realtime-2 Feels Different From Earlier AI APIs

One reason developers are paying close attention to GPT-Realtime-2 is because it represents a shift in what AI infrastructure is optimizing for.

Traditional language models were largely optimized around output quality and benchmark performance. Real-time multimodal systems introduce a completely different set of constraints.

Now the system needs to:

stream responses continuously

maintain conversational state

synchronize audio and text simultaneously

minimize latency aggressively

process interruptions naturally

respond quickly enough to preserve conversational flow

This changes the engineering challenge entirely.

Suddenly, token generation speed matters more than ever. Streaming pipelines become critical. GPU scheduling efficiency starts impacting user experience directly. Infrastructure architecture becomes inseparable from conversational quality.

That’s why many developers believe real-time AI represents the next major platform transition in artificial intelligence—not because the underlying models suddenly became infinitely smarter, but because the interaction layer itself is evolving.

The Developer Community Is Both Excited and Skeptical

If you spend time reading discussions across Reddit, Hacker News, X, Discord communities, and open-source AI forums, the reaction to GPT-Realtime-2 is surprisingly nuanced.

There’s genuine excitement around how fluid these interactions feel compared to traditional chatbot systems. Developers experimenting with real-time voice applications quickly realize how transformative low-latency interaction can be. Even relatively small reductions in delay dramatically improve perceived intelligence and conversational quality.

But there’s also skepticism.

A large portion of the AI community remains deeply interested in open infrastructure, local inference, and model portability. Many developers don’t want the future of AI to become entirely dependent on centralized APIs controlled by a small number of companies.

This tension is especially visible inside the open-source ecosystem.

The open-source AI movement has made incredible progress over the past year in areas like:

reasoning models

coding models

quantization

fine-tuning

local inference optimization

But real-time multimodal interaction remains one of the hardest challenges in AI infrastructure.

Building systems that feel conversationally natural requires far more than simply generating accurate text. It requires optimized streaming pipelines, interruption handling, audio synchronization, low-latency inference, efficient concurrency management, and highly tuned infrastructure orchestration.

That’s one reason why searches for:

GPT-Realtime-2 alternatives

open source voice AI

local multimodal AI

real-time LLM frameworks

have started increasing rapidly.

Developers want the responsiveness of frontier systems while maintaining the flexibility and openness of community-driven ecosystems. Right now, that balance remains difficult to achieve.

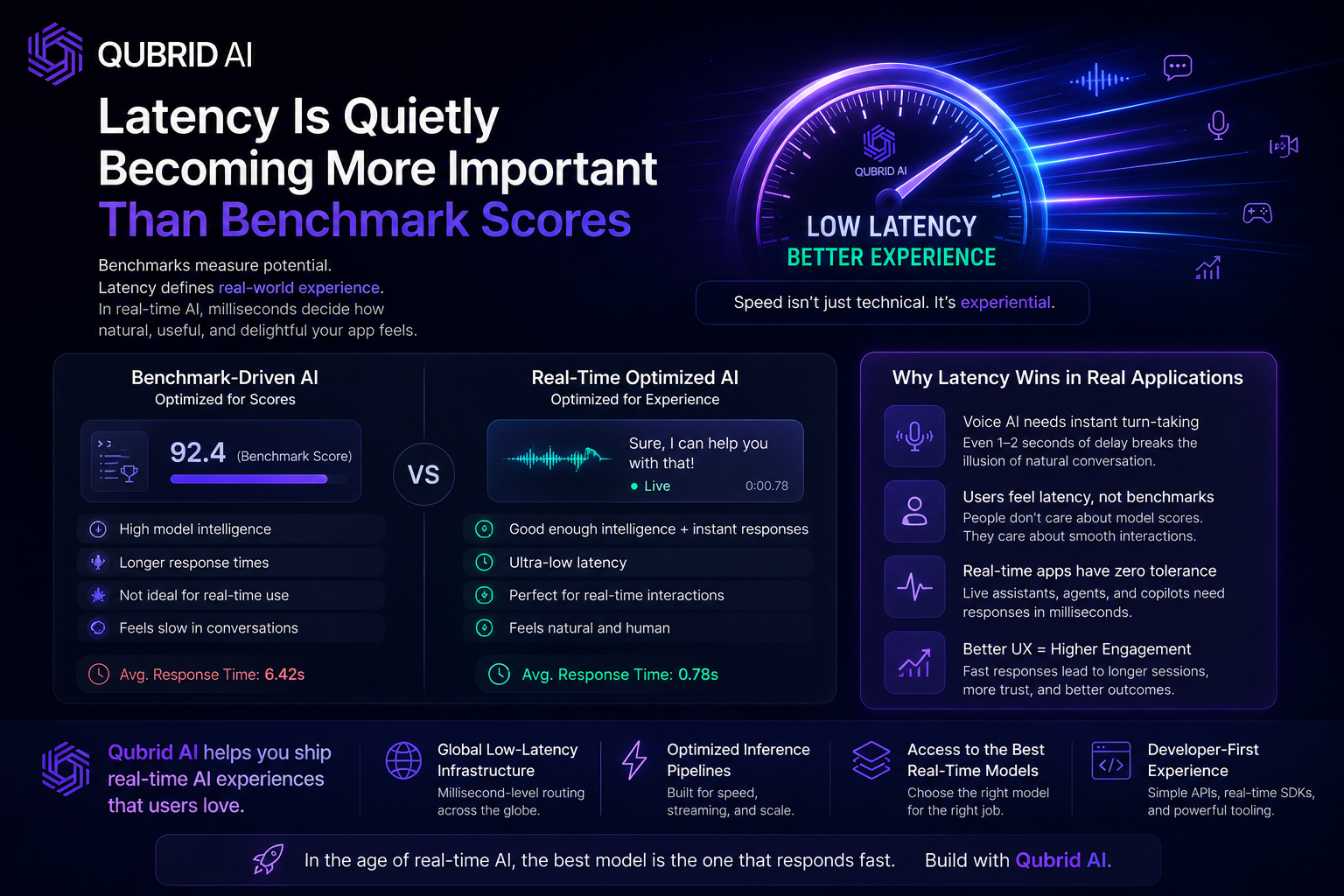

Latency Is Quietly Becoming More Important Than Benchmark Scores

One of the most interesting shifts happening in AI right now is that latency is beginning to matter as much as intelligence itself.

For years, the AI industry conditioned people to think primarily in terms of benchmark scores. Higher reasoning scores meant better models. Better coding performance meant stronger systems.

But real-world interaction is more complicated than benchmark evaluations.

Users don’t directly experience benchmark numbers.

They experience:

pauses

interruptions

conversational rhythm

response delays

interaction smoothness

And in many cases, a model that responds naturally and instantly can feel dramatically more impressive than a technically smarter system with noticeable latency.

This becomes especially important for:

voice assistants

AI customer support systems

conversational agents

AI meeting assistants

live collaboration tools

interactive copilots

Because once interaction becomes real time, every delay becomes visible.

That’s why real-time AI infrastructure is suddenly becoming one of the most strategically important layers in the entire AI ecosystem.

Why This Matters for Startups and AI Companies

The rise of systems like GPT-Realtime-2 is also changing how startups think about product design.

A year ago, many AI companies built products around static prompt-response workflows. But increasingly, startups are beginning to design products around continuous interaction instead.

This shift opens the door to entirely new categories of software:

AI phone agents

conversational operating systems

real-time AI tutors

live AI collaboration platforms

persistent AI coworkers

multimodal productivity tools

And importantly, these products require very different infrastructure assumptions compared to traditional SaaS applications.

Responsiveness becomes product-defining.

Latency becomes part of UX design.

Streaming becomes mandatory rather than optional.

This is one reason why infrastructure providers, GPU platforms, inference companies, and AI deployment startups are paying such close attention to real-time AI workloads right now.

The Open-Source Question Isn’t Going Away

One of the biggest debates surrounding systems like GPT-Realtime-2 is whether real-time multimodal AI will eventually become more open or remain dominated by centralized providers.

Right now, frontier real-time systems still hold a noticeable lead in conversational quality and responsiveness. But the open-source ecosystem moves extremely quickly, especially once enough developers begin focusing on a specific problem category.

The same thing happened with coding models, reasoning models, and local inference optimization.

Many developers believe real-time multimodal AI will eventually follow a similar trajectory.

Until then, however, most teams will likely continue experimenting with hybrid approaches:

combining hosted APIs with local models

routing workloads dynamically

balancing latency against cost

mixing open and proprietary systems depending on use case

And that hybrid future may ultimately define the next phase of AI infrastructure more than any single model release.

Why GPT-Realtime-2 Matters Beyond Voice AI

The most important thing to understand about GPT-Realtime-2 is that it’s not really just about voice.

Voice is simply the first visible layer of a much larger transition.

What’s actually happening is that AI systems are beginning to evolve from isolated tools into persistent interaction layers embedded directly into software environments, workflows, operating systems, and communication platforms.

That changes the role AI plays entirely.

Instead of occasionally asking a chatbot for help, users increasingly expect AI systems to:

collaborate continuously

operate contextually

respond instantly

remain aware of ongoing interaction

And that evolution could reshape software interfaces over the next decade in ways that feel comparable to the transition from desktop computing to mobile platforms.

Final Thoughts

The growing interest around the GPT-Realtime-2 API reflects something much larger than another AI model release.

It reflects a growing realization across the industry that intelligence alone is no longer enough.

The next generation of AI systems will also need to feel:

responsive

conversational

contextually aware

multimodal

low-latency

continuously interactive

And achieving that requires solving infrastructure, deployment, streaming, and interaction challenges that go far beyond traditional benchmark optimization.

That’s why developers, startups, infrastructure providers, and even the open-source community are watching this category so closely.

Because the next major battleground in AI may not be who builds the smartest model.

It may be who builds the first system that truly feels seamless to interact with in real time.