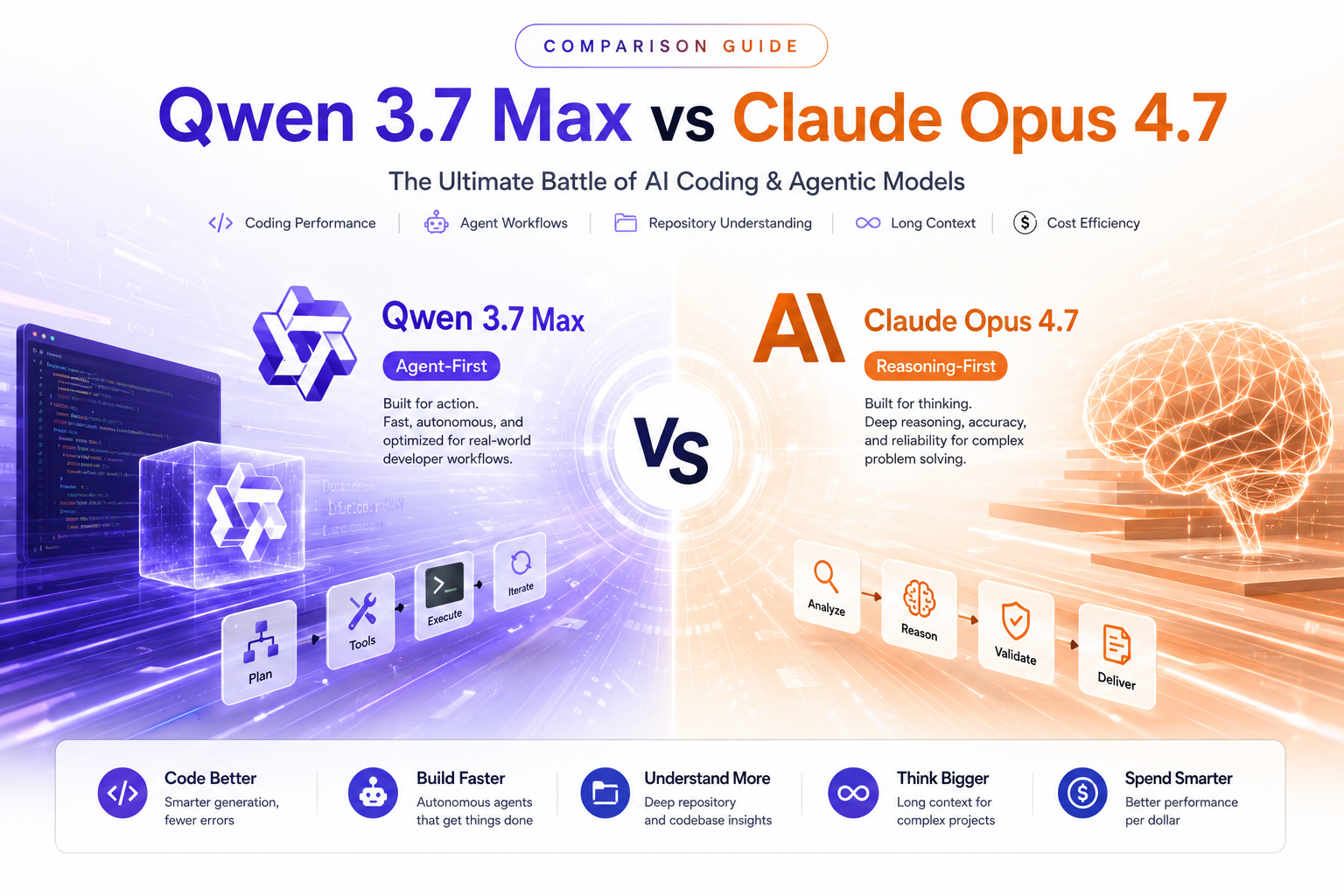

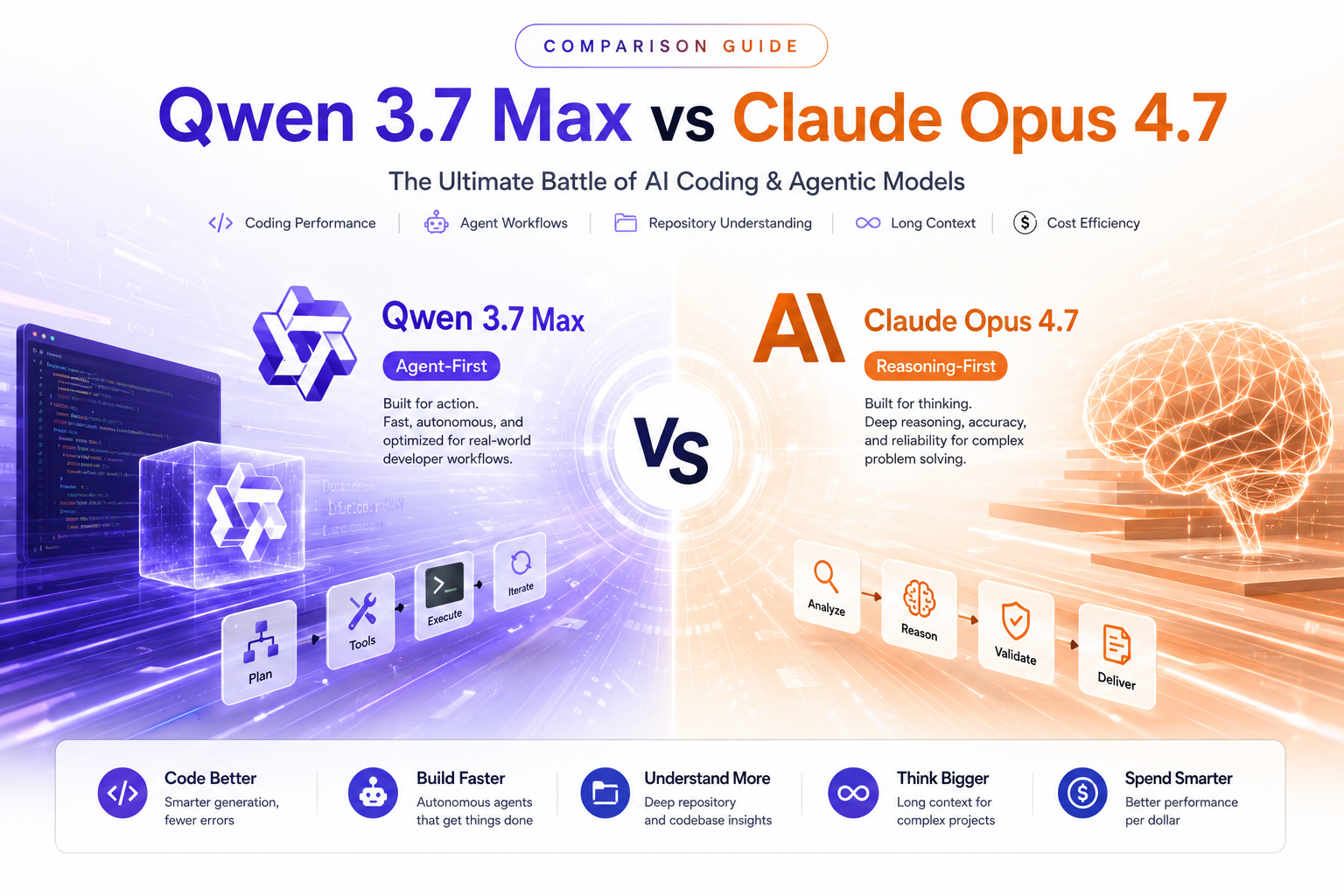

Qwen 3.7 Max vs Claude Opus 4.7: Can Alibaba Finally Challenge Anthropic's Coding King?

Comparing Qwen 3.7 Max and Claude Opus 4.7 across software engineering, AI agents, long-context reasoning, benchmark performance, and API costs.

One of the strongest frontier models for coding agents, MCP workflows, and long-horizon AI execution is now available on Qubrid AI.

As open-weight models, inference optimization, and GPU infrastructure evolve rapidly, organizations are beginning to rethink where AI workloads should actually run. This deep technical analysis explores the real economics, performance tradeoffs, latency considerations, and architectural shifts driving the rise of hybrid AI systems across local, cloud, and on-prem deployments.

From low-latency voice assistants and streaming multimodal systems to the future of conversational infrastructure, here’s why GPT-Realtime-2 is becoming one of the most discussed topics among developers, startups, and the broader AI community.

If you've been following the open-source LLM space over the past few months, you already know that Moonshot AI has been one of the more interesting players to watch. Their latest release, Kimi K2.6, is generating real attention among developers, and not just because of the benchmark numbers.

Official announcements from Qubrid AI

Qubrid AI, a leading Open, Inference-First Full-Stack AI Platform company, today announced at NVIDIA GTC 2026 the addition and acceleration of over forty open-source models powered by NVIDIA AI infrastructure. Enterprise agent developers can integrate a single API provided by Qubrid, run inference across more than forty models from within their agentic applications, decide which model suits their requirements, and then scale using NVIDIA GPU VMs or dedicated GPU servers, all running on Qubrid's advanced AI platform.

DeepSeek V4 Pro API Explained in Depth: Intelligence Scores, Token Usage, Latency, Pricing, and How to Optimize It for Production

Shubham Tribedi

NVIDIA dropped two very different open models in 2026. One is a heavyweight reasoning engine designed for large-scale multi-agent pipelines and complex agentic workflows. The other is a lean, omni-modal perception model that sees, hears, reads, and reasons, all on a single GPU. Same NVIDIA Nemotron DNA. Radically different use cases.

QubridAI

Kimi K2.6 is Moonshot AI's latest open-source model built for long-horizon coding, multimodal input, and agent swarm workflows. And the easiest way to access it via API right now is through Qubrid AI, which gives you instant serverless access without touching any GPU infrastructure.

QubridAI

You're building something that matters. Maybe it's an autonomous coding agent, a document-heavy RAG pipeline, or a multi-step workflow that needs to think before it acts. You've heard the buzz around Alibaba's Qwen3.6 family: two models, same lineage, very different personalities. Here's the uncomfortable truth: picking the wrong one won't just cost you benchmark points. It'll cost you latency, money, and in some cases, the quality ceiling your product actually needs.

QubridAI

Most open-source AI releases ask you to make a trade-off: raw power or practical speed. DeepSeek's V4 series refuses that bargain. With two models (one built for scale, one built for velocity) and a shared architecture that supports a full **one million token context window**, the DeepSeek-V4 series is one of the most thoughtfully designed open-weight releases to date. Whether you're building latency-sensitive applications or tackling complex agentic workflows, there's a V4 model designed for exactly what you need.

QubridAI

Most AI pipelines are a mess of duct tape. You have one model handling vision, another transcribing audio, and yet another stitching it all together, each hop adding latency, complexity, and cost. If you've built anything resembling an agentic system lately, you've felt this pain firsthand.

QubridAI

If you’ve been waiting for a model that doesn’t make you choose between speed and intelligence, DeepSeek V4 Flash might be exactly what you’ve been looking for. Built on the same architectural lineage as DeepSeek V3 and the newly released DeepSeek V4 Pro, V4 Flash is optimized for developers who need rapid, reliable responses without sacrificing reasoning depth. It’s lean, it’s quick, and it’s now available on Qubrid AI.

QubridAI

The open-source leaderboard just got reshuffled again. DeepSeek-V4-Pro, the latest flagship from DeepSeek AI, has arrived with a claim that's hard to ignore: 1.6 trillion parameters, a 1 million token context window, and benchmark numbers that rival the best closed-source models on the planet. For developers who care about what's actually happening at the frontier of open-weight AI, this one deserves a close look.

QubridAI

A 27-billion-parameter model that beats 400B-class systems on coding benchmarks shouldn't exist. Qwen3.6-27B does. Alibaba's Qwen team just released the first open-weight model from the Qwen3.6 series, and it's turning heads for one reason: a compact dense model is now outperforming much larger Mixture-of-Experts systems on the benchmarks that developers actually care about: real-world software engineering, agentic coding, and frontier-level reasoning. No MoE routing overhead, no inflated parameter budgets. Just 27B dense parameters, a rethought hybrid architecture, and a 262K-token native context window.

QubridAI

What if your AI agent could spend 13 hours autonomously rewriting the core of a financial matching engine, making 1,000+ tool calls, analyzing CPU flame graphs, and delivering a 185% throughput improvement without a single human intervention?

QubridAI

Large language models are moving fast. But every so often, a release lands that feels genuinely different: not just an incremental tuning run, but a step up in what's actually possible. Qwen3.6-Max-Preview, released by Alibaba on April 20, 2026, is one of those releases.

QubridAI

The landscape of Anthropic's model lineup shifted meaningfully twice in early 2026. First, Claude Sonnet 4.6 launched in February 2026 as the first Sonnet to surpass the prior generation's Opus on coding, redefining what a mid-tier model could do. Then, Claude Opus 4.7 arrived in April 2026 as a notable improvement on Opus 4.6 in advanced software engineering, with particular gains on the most difficult tasks.

Shubham Tribedi

How Chaitanya Bharathi Institute of Technology scaled advanced clinical image classification using NVIDIA GPUs on Qubrid AI