Kimi K2.6 API on Qubrid AI - Setup, Performance, Pricing, and What You Need to Know Before Going to Production

If you've been following the open-source LLM space over the past few months, you already know that Moonshot AI has been one of the more interesting players to watch. Their latest release, Kimi K2.6, is generating real attention among developers, and not just because of the benchmark numbers.

Try Kimi K2.6 on Qubrid AI here: https://www.qubrid.com/models/kimi-k2.6

Kimi K2.6 isn't simply a model update. It represents a meaningful shift in what open-weight models are now capable of, particularly in long-horizon coding, complex reasoning, and multi-agent orchestration. For developers who've been frustrated by the cost ceiling of proprietary frontier models, K2.6 opens up a genuinely different path, one that doesn't require you to compromise on capability. But accessing the model is one thing. Using it efficiently in production is something else entirely.

Why the Kimi K2.6 API Is Getting Attention

Interest in the Kimi K2.6 API has grown quickly, especially among teams building AI-heavy applications where both output quality and infrastructure costs are real concerns. Compared to proprietary frontier models like GPT and Claude, K2.6 offers a different trade-off: strong reasoning performance combined with open-weight flexibility. That combination is exactly what many backend and platform teams have been looking for.

For use cases like code generation, long-context document analysis, structured outputs, and agentic workflows, Kimi K2.6 holds up well. Its architecture is a Mixture-of-Experts (MoE) design with 1 trillion total parameters and 32 billion active per token, so only about 3% of the weights are used for any given token. That lets it punch well above its weight on reasoning-heavy tasks without the full inference cost of a dense model of the same total size.

Developers searching for terms like "best open-source LLM API," "Kimi K2.6 integration," or "OpenAI alternative for coding" are often not just experimenting; they're actively looking to reduce inference bills while keeping output quality high. Kimi K2.6 fits directly into that intent.

Still, there are important nuances that only become visible once you move past simple testing.

Architecture and What Makes K2.6 Different

Kimi K2.6 is built on a sparse Mixture-of-Experts transformer with 384 experts, using Multi-head Latent Attention (MLA) for efficient inference. With a 256K token context window and native multimodal support for text, image, and video, it's designed for exactly the kind of long-horizon, complex workloads that most lightweight models struggle with.

It also ships with two distinct operating modes: a standard instant mode for fast, direct responses, and a thinking mode that enables full chain-of-thought reasoning. This flexibility makes it genuinely versatile; you're not forced to choose between speed and depth at the model level. You choose based on the task.

The agent swarm capability, which supports up to 300 parallel sub-agents coordinated across 4,000 steps, puts it in a category that very few open-source models occupy today.

Benchmark Performance: Where Kimi K2.6 Stands Today

On coding benchmarks, Kimi K2.6 delivers results competitive with leading frontier models. It scores strongly on SWE-Bench Verified, LiveCodeBench, and Aider Polyglot, benchmarks built around tasks that require sustained multi-step reasoning, not just pattern matching. In agent and tool-use evaluations, it consistently outperforms models of comparable size. But as with any model, benchmarks only tell part of the story.

The MoE architecture means K2.6 is computationally efficient compared to dense models of similar capability, but the thinking mode in particular can generate significantly longer outputs when applied to complex problems. That verbosity is intentional. The model is doing more work on your behalf. But it directly affects both latency and token consumption per request. This is one of the details that matters in production and doesn't show up in a headline benchmark score.

Speed and Latency: The Trade-Off Behind Thinking Mode

Latency is where the operational trade-offs become clearest.

In instant mode, Kimi K2.6 delivers competitive token-per-second throughput suitable for interactive applications, chat interfaces, and copilot-style tools where responsiveness matters. In thinking mode, the model trades speed for depth. Response times increase, but the quality of reasoning on complex tasks improves substantially.

This distinction matters a lot depending on what you're building. For real-time user-facing applications, instant mode is the right default. For backend workflows, evaluation pipelines, or tasks where a slower but higher-quality answer is worth the wait, thinking mode earns its overhead.

Searches like "fastest LLM API" and "low latency AI inference" are popular for a reason, and K2.6 doesn't pretend to win on raw throughput. Instead, it gives you the controls to decide when speed matters and when it doesn't.

Pricing and Cost Efficiency: Where It Gets Nuanced

On a per-token basis, Kimi K2.6 is priced competitively, particularly relative to frontier proprietary models. On Qubrid AI, input tokens are billed at $0.89 per million, cached input at $0.18 per million, and output at $3.71 per million. For tasks where K2.6's reasoning capabilities are genuinely needed, that pricing holds up well against alternatives. But the real cost story isn't just the price per token; it's how many tokens you end up using.
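To make those rates concrete, here is a minimal sketch of a per-request cost estimate. The three rates come from the pricing above. The function name, the example token counts, and the assumption that cached tokens are simply billed at the cached rate in place of the full input rate are illustrative, not taken from Qubrid's billing documentation.

```python
# Per-million-token rates quoted above for Kimi K2.6 on Qubrid AI (USD).
INPUT_PER_M = 0.89
CACHED_INPUT_PER_M = 0.18
OUTPUT_PER_M = 3.71


def request_cost(input_tokens: int, output_tokens: int, cached_tokens: int = 0) -> float:
    """Estimate the USD cost of one request.

    cached_tokens is the portion of input_tokens served from cache.
    Assumption: those tokens are billed at the cached rate instead of
    the full input rate.
    """
    if not 0 <= cached_tokens <= input_tokens:
        raise ValueError("cached_tokens must be between 0 and input_tokens")
    fresh_tokens = input_tokens - cached_tokens
    return (
        fresh_tokens * INPUT_PER_M
        + cached_tokens * CACHED_INPUT_PER_M
        + output_tokens * OUTPUT_PER_M
    ) / 1_000_000


# Hypothetical request: 8,000 input tokens (6,000 of them cached), 1,500 output tokens.
cost = request_cost(8_000, 1_500, cached_tokens=6_000)
print(f"${cost:.6f}")  # prints $0.008425
```

In this made-up example, output tokens account for roughly two thirds of the bill even though they are less than a fifth of the total tokens, which is why output length deserves as much attention as the rate card.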

In thinking mode, K2.6 generates considerably more output than it would in instant mode. The model reasons through problems explicitly, which means longer completions and higher token counts per request. For tasks that benefit from deep reasoning, this is the right behavior. For simpler workloads, it's unnecessary overhead.

This is where many teams run into unexpected cost scaling. What looks affordable during testing can grow quickly in production if thinking mode is left on by default across all request types. A more accurate way to think about it: Kimi K2.6 is cost-efficient when used for tasks that actually warrant its reasoning depth. For simpler requests (retrieval, summarization, quick Q&A), instant mode or a lighter model entirely is the better call.

That's also why developers are increasingly exploring multi-model routing, where K2.6 is deployed selectively, only where its capabilities justify the cost.

The Real Production Cost: Token Usage in Context

Looking at per-token pricing alone doesn't give you an accurate picture of what K2.6 will actually cost in a production system. The more useful metric is cost per completed task, which factors in how many tokens the model consumes to finish the job.

In thinking mode specifically, K2.6 will generate significantly more tokens per request than it would in instant mode, or than a lighter model would on the same task. For complex coding or reasoning tasks, the token usage is justified because the output quality is measurably better. But for high-volume workloads where every request goes through thinking mode by default, the cost per task increases substantially.

This has real implications at scale:

Higher cost per user interaction in thinking-mode-heavy workflows

Increased latency per request due to longer outputs

Reduced throughput efficiency under concurrent load

This is why teams evaluating "Kimi K2.6 cost vs alternatives" quickly move past pricing tables and start looking at cost-per-task benchmarks alongside token efficiency metrics.
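A rough sketch of what a cost-per-task comparison might look like is below. The per-token rates are the Qubrid prices quoted earlier. The token counts and success rates are hypothetical placeholders, not measurements, and dividing by a success rate is just one simple way to account for failed attempts that still get billed.

```python
INPUT_PER_M = 0.89   # USD per million input tokens (from the pricing section)
OUTPUT_PER_M = 3.71  # USD per million output tokens (from the pricing section)


def cost_per_task(input_tokens: int, output_tokens: int, success_rate: float) -> float:
    """Expected USD cost per *completed* task.

    Failed attempts still cost money, so the per-request cost is divided
    by the fraction of requests that actually finish the job.
    """
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    per_request = (input_tokens * INPUT_PER_M + output_tokens * OUTPUT_PER_M) / 1_000_000
    return per_request / success_rate


# Hypothetical workload numbers, for illustration only (not measurements).
instant = cost_per_task(input_tokens=4_000, output_tokens=600, success_rate=0.70)
thinking = cost_per_task(input_tokens=4_000, output_tokens=5_000, success_rate=0.95)

print(f"instant:  ${instant:.4f} per completed task")
print(f"thinking: ${thinking:.4f} per completed task")
print(f"thinking / instant: {thinking / instant:.1f}x")
```

Swap in token counts and success rates from your own logs. The point of the exercise is that the ratio between modes depends on your workload, so it is worth measuring rather than assuming.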

The takeaway: K2.6 is not an expensive model, but it can become one if it's used without consideration for when thinking mode is actually necessary. The model prioritizes depth, detail, and explicit reasoning. You're paying for the quality of its thought process, not just the final output. For certain use cases, that's exactly what you want. For others, it's overhead.

This is precisely why using K2.6 within a multi-model system rather than as a single dependency becomes so much more practical at scale.

The Hidden Complexity of Using Kimi K2.6 Directly

On paper, integrating the Kimi K2.6 API looks simple. You get access, point your requests at the endpoint, and start getting responses. But as usage grows and workloads diversify, limitations become visible.

Relying on a single model for every request type creates friction you don't anticipate during testing. Some tasks don't need the depth of K2.6's thinking mode. Others need faster turnaround than a reasoning-heavy model can reliably deliver. And managing cost optimization manually across a single-model setup quickly becomes a bottleneck as volume scales.

This is why developers searching for "multi-model AI API" or "unified LLM API" are already thinking one step ahead. They're not just asking how to use Kimi K2.6; they're asking how to use it alongside other models without adding complexity to their stack.

That's where things get interesting.

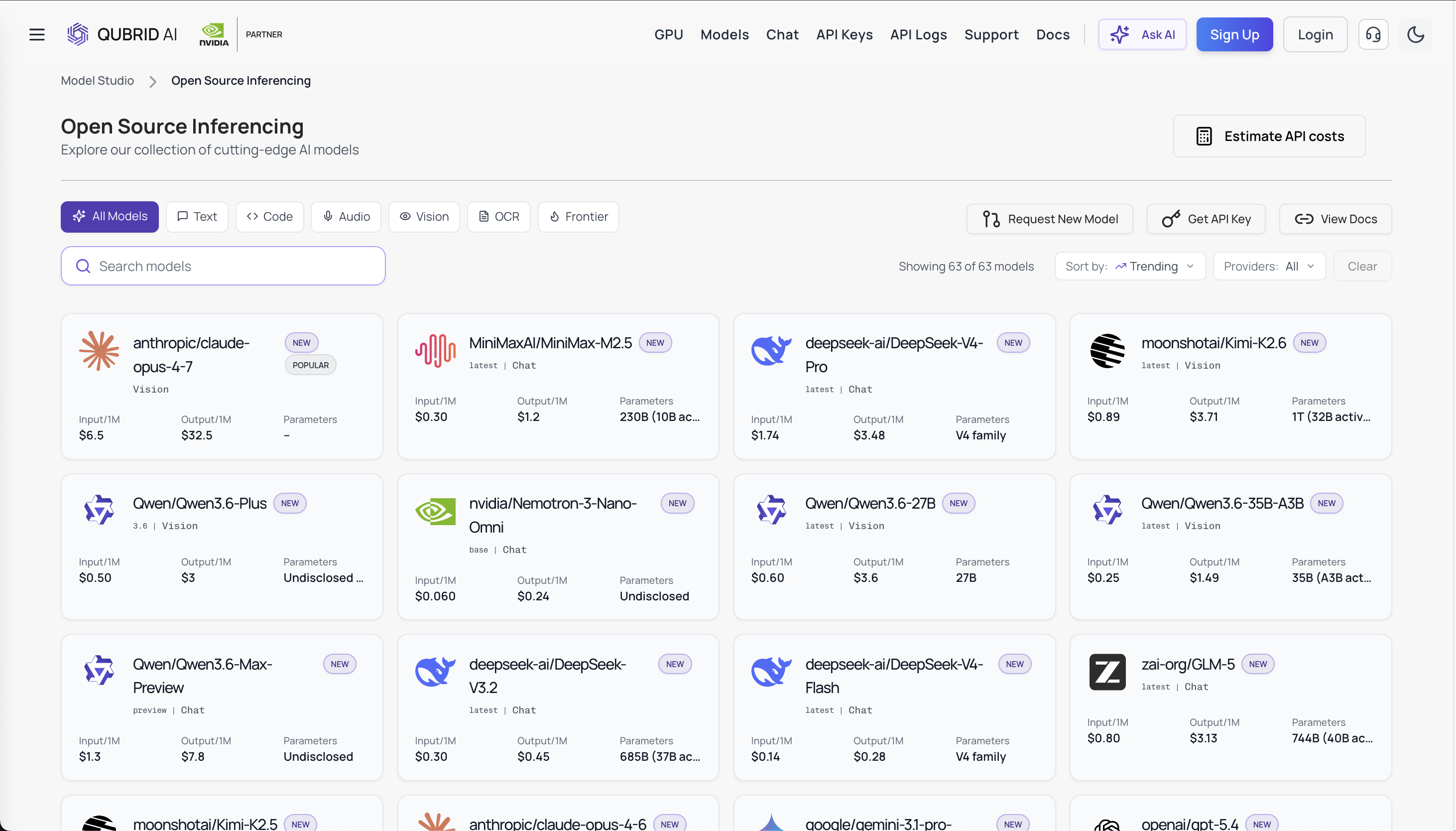

Using the Kimi K2.6 API Through Qubrid AI

Instead of treating Kimi K2.6 as a standalone dependency, a more scalable approach is to access it through a unified platform like Qubrid AI. This changes the role of the API entirely.

With Qubrid AI, K2.6 becomes part of a broader multi-model system rather than a single point of reliance. You can call it when you need deep reasoning and long-context analysis, switch to a faster or cheaper model for lighter workloads, and manage everything through a single OpenAI-compatible endpoint without rewriting your integration every time your requirements shift.

One of the biggest advantages of accessing Kimi K2.6 through Qubrid is how straightforward the integration actually is. Whether you're building in Python, Node.js, Go, or using cURL, the experience is consistent and familiar. If you've worked with the OpenAI SDK before, you can start using K2.6 on Qubrid with a single base URL change and a model name swap.

No custom SDK. No provider-specific quirks. No steep learning curve.

When Kimi K2.6 Actually Makes Sense

Kimi K2.6 is not a one-size-fits-all model, but in the right scenarios, it's exceptionally effective.

It works best for:

Complex multi-step coding and debugging tasks

Long-context document analysis (up to 256K tokens)

Agentic workflows requiring sustained multi-step reasoning

Backend automation where latency is secondary to accuracy

Multimodal inputs where text, image, and video need to be processed together

In these situations, the additional cost of thinking mode is usually justified by the quality of results.

For simpler, high-volume tasks (retrieval, formatting, short-form responses), instant mode or a lighter model is a better fit. The goal isn't to maximize K2.6 usage. It's to use it where it genuinely adds value.

A More Practical Way to Think About LLM APIs

One of the most common mistakes in production AI systems is treating model selection as a one-time, all-or-nothing decision. The most efficient systems today are built around dynamic model usage: routing each request to the right model based on task type, latency requirements, and cost targets.

For example, you might use Kimi K2.6 in thinking mode for complex reasoning pipelines where accuracy is critical, instant mode for interactive user-facing features, and a lighter model entirely for high-volume, low-complexity requests. Managing that manually across separate APIs quickly becomes messy. This is exactly why unified inference platforms are gaining adoption.
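As a minimal sketch of that routing idea, the function below maps coarse task traits to a model and mode. The Kimi model name is the one Qubrid uses for K2.6. Everything else is an assumption for illustration: `LIGHT_MODEL` is a placeholder rather than a real model ID, the task categories are made up, and the `mode` field is a label for your own code, not a documented API parameter.

```python
from dataclasses import dataclass

KIMI = "moonshotai/Kimi-K2.6"        # model name used for K2.6 on Qubrid
LIGHT_MODEL = "example/light-model"  # hypothetical placeholder, not a real model ID


@dataclass(frozen=True)
class Route:
    model: str
    mode: str  # "thinking" or "instant"; a label for your own code, not an API parameter


def pick_route(task_type: str, interactive: bool) -> Route:
    """Choose a model and mode from coarse task traits."""
    # Illustrative task categories; replace with whatever your system tracks.
    simple_tasks = {"retrieval", "summarization", "formatting", "quick_qa"}
    if task_type in simple_tasks:
        return Route(LIGHT_MODEL, "instant")  # high volume, low complexity
    if interactive:
        return Route(KIMI, "instant")         # user is waiting, favor latency
    return Route(KIMI, "thinking")            # backend work, favor accuracy


print(pick_route("code_review", interactive=False))
print(pick_route("code_review", interactive=True))
print(pick_route("summarization", interactive=True))
```

A real router would likely also consider prompt length, fallback behavior, and per-tenant budgets, but even a rule table this small keeps thinking mode from becoming the accidental default.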

The Kimi K2.6 API, used in isolation, is powerful. Used within a system that supports routing, fallback handling, and multi-model orchestration, it becomes significantly more valuable and significantly more cost-efficient.

Three Simple Ways to Get Started on Qubrid AI

Qubrid doesn't lock you into a single way of working. Depending on your use case, you have three distinct paths to access and deploy Kimi K2.6.

API Access (Fastest Way to Integrate): Generate an API key at platform.qubrid.com and start making requests immediately using OpenAI-compatible endpoints. This is the right starting point for developers building applications, backend services, or AI-powered features that need to scale. The model name is moonshotai/Kimi-K2.6, and the base URL is https://platform.qubrid.com/v1. That's genuinely all you need to change in an existing OpenAI integration.

Dedicated GPU Deployment (More Control and Performance): For teams that need isolated environments, consistent throughput, or more control over inference configuration, Qubrid supports dedicated GPU VM deployments starting at around $1.25 per GPU per hour. This is practical for production workloads where performance consistency matters more than pure cost minimization.

Playground (Best for Testing and Iteration): Before writing any integration code, the Qubrid Playground lets you test prompts, compare instant vs. thinking mode responses, and calibrate token usage interactively. For a model like K2.6, where prompt structure and mode selection can significantly impact both output quality and cost, starting in the playground saves time and avoids surprises later.

The full workflow looks like this:

Test in Playground → Integrate via API → Scale via dedicated infrastructure

And because everything runs through OpenAI-compatible endpoints, switching between models or between providers doesn't require reworking your stack.
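For reference, a minimal sketch of what an API call could look like using only the Python standard library. The base URL and model name are the ones given above. The `/chat/completions` path, the Bearer authorization header, and the response shape follow the usual OpenAI convention and are assumptions here, so check them against Qubrid's documentation before relying on them. With the OpenAI SDK, the equivalent change would be pointing the client at the same base URL and model name.

```python
import json
import urllib.request

BASE_URL = "https://platform.qubrid.com/v1"  # base URL given above
MODEL = "moonshotai/Kimi-K2.6"               # model name given above


def build_chat_request(prompt: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": MODEL,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",  # assumed path, per the OpenAI convention
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",  # assumed auth scheme
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send(request: urllib.request.Request) -> str:
    """Send the request and return the first reply (assumes an OpenAI-style response)."""
    with urllib.request.urlopen(request, timeout=60) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]


# Building the request needs no network access; call send(req) with a real key to run it.
req = build_chat_request("Explain MoE routing in two sentences.", api_key="YOUR_API_KEY")
print(req.get_method(), req.full_url)
```

Keeping request construction separate from sending makes it easy to swap the model name or base URL in one place, which is the practical payoff of an OpenAI-compatible endpoint.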

Final Thoughts

Kimi K2.6 is one of the most capable open-weight models available right now, and the Qubrid AI platform makes it accessible without the usual infrastructure overhead. But like any powerful model, it rewards thoughtful usage.

The trade-offs are real:

Strong reasoning capability (competitive with frontier models on coding and agent tasks)

High output verbosity in thinking mode

Cost scales with how you use it, not just the base per-token rate

Latency varies significantly between instant and thinking mode

These aren't reasons to avoid Kimi K2.6. They are reasons to use it strategically.

Accessing K2.6 through Qubrid AI gives you the flexibility to do exactly that: calling the model selectively for tasks where its depth is justified, routing to faster alternatives when it isn't, and managing everything through a single unified API without increasing stack complexity.

In a space where both expectations and inference costs are rising, that kind of flexibility is what separates a working proof-of-concept from a production-ready AI system.