Ultimate Guide to MiniMax-M2.1: Building Agent-Ready AI Applications with Qubrid AI

One of the latest models gaining attention among developers is MiniMax-M2.1, released by MiniMax AI. Built with a Mixture-of-Experts architecture, the model is designed for software engineering tasks, long-context reasoning, and AI agent development.

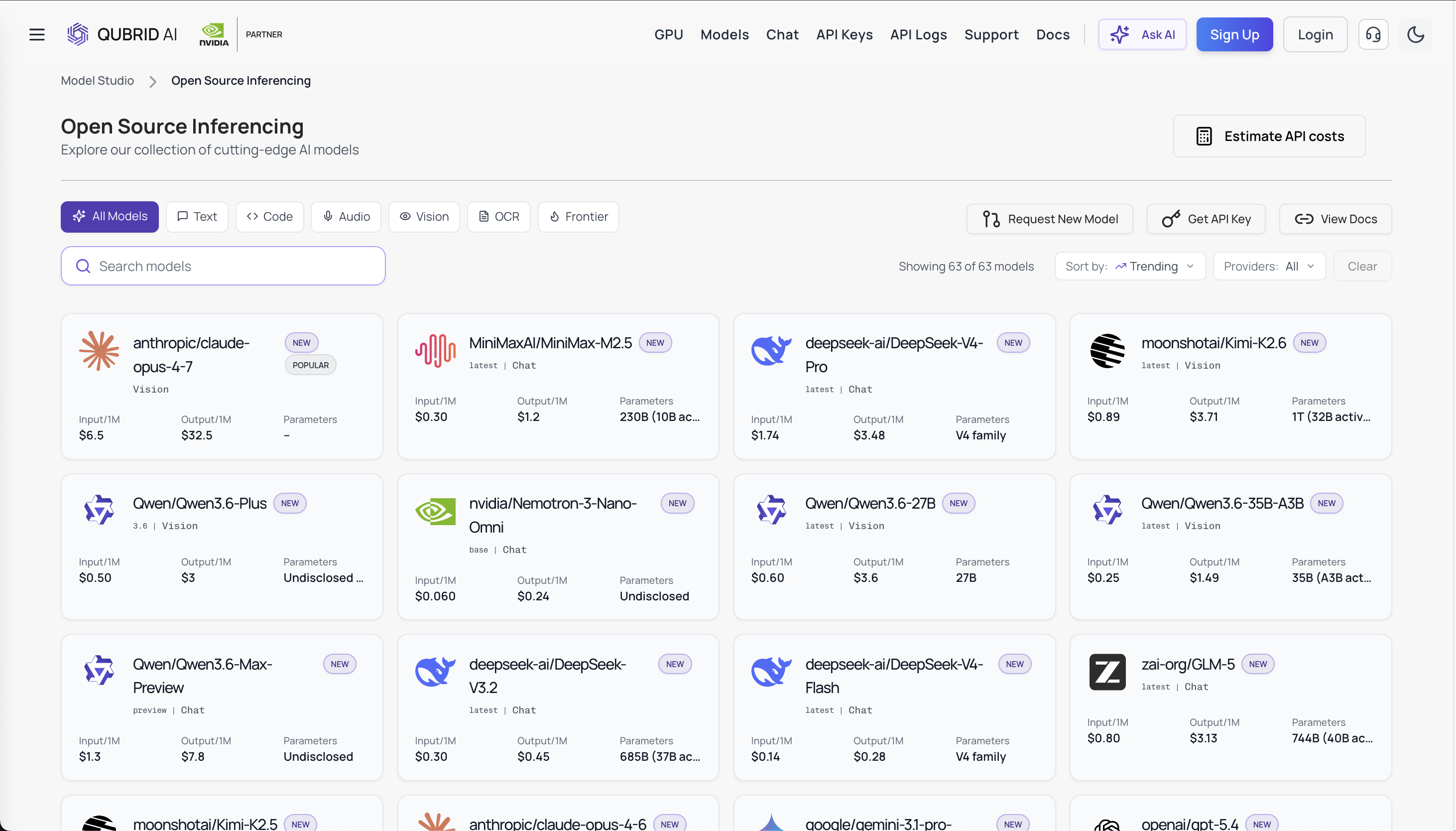

Platforms like Qubrid AI let developers experiment with models like MiniMax-M2.1 without provisioning and configuring their own GPU infrastructure.

In this article, we’ll explore what MiniMax-M2.1 is, how its architecture works, and how it performs on key benchmarks. We’ll also show how to test the model in the Qubrid AI playground and integrate it into applications using APIs.

What is MiniMax-M2.1?

MiniMax-M2.1 is a Mixture-of-Experts (MoE) large language model optimized for coding, reasoning, and autonomous agent workflows.

Key characteristics include:

| Feature | Specification |
|---|---|
| Total parameters | ~230B |
| Active parameters per token | ~10B |
| Architecture | Mixture-of-Experts |
| Focus | Coding, reasoning, agents |
| Context window | Long-context support |

Unlike a traditional dense transformer, where every parameter participates in computing each token, MiniMax-M2.1 activates only a subset of its expert networks per token. This cuts per-token compute relative to a dense model of the same total size while keeping the capacity of the full parameter count.
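The parameter figures in the table above can be turned into a rough cost estimate. The sketch below uses the common rule of thumb of about 2 FLOPs per active parameter per forward-pass token; that rule is an approximation we are assuming here (it ignores attention over the context), and the dense 230B comparison is hypothetical.

```python
# Approximate figures from the specification table, in billions of parameters.
TOTAL_PARAMS_B = 230
ACTIVE_PARAMS_B = 10


def active_fraction(total_b: float, active_b: float) -> float:
    """Share of the model's parameters used in one token's forward pass."""
    return active_b / total_b


def approx_forward_gflops_per_token(active_b: float) -> float:
    """Rule of thumb: ~2 FLOPs (one multiply, one add) per active parameter.

    Billions of parameters * 2 gives GFLOPs. Attention over long contexts
    adds cost on top of this, so treat the result as a lower bound.
    """
    return 2 * active_b


frac = active_fraction(TOTAL_PARAMS_B, ACTIVE_PARAMS_B)
print(f"Active share per token: {frac:.1%}")  # Active share per token: 4.3%
print(f"MoE (10B active): ~{approx_forward_gflops_per_token(ACTIVE_PARAMS_B):.0f} GFLOPs/token")
print(f"Dense 230B (hypothetical): ~{approx_forward_gflops_per_token(TOTAL_PARAMS_B):.0f} GFLOPs/token")
```

Under this estimate, each token touches a little over 4% of the weights, which is where the per-token savings come from. Memory is a different story: all ~230B parameters still have to be loaded somewhere to serve the model.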

The model is particularly well suited for building AI coding assistants, software engineering agents, DevOps automation tools, and applications that require reasoning over large amounts of context.

👉 Try MiniMax-M2.1 on the Qubrid AI Playground: https://qubrid.com/models/minimax-m2.1

Understanding the Mixture-of-Experts Architecture

MiniMax-M2.1 uses a sparse Mixture-of-Experts architecture, which lets a model grow in total size without a matching increase in per-token compute. Every token still passes through every layer, but in each MoE layer the dense feed-forward block is replaced by a set of expert networks, and a router network selects a small number of experts to process each token.

Simplified MoE workflow

Input Prompt → Routing Network → Top-K Expert Selection → Expert Networks → Combined Output → Generated Token
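The routing steps above can be sketched in plain Python. This is a generic top-k softmax router with toy experts, not MiniMax-M2.1's actual implementation: the article does not describe the model's real router, gating function, or value of k, so k=2, the dot-product router, and the scaling "experts" are all illustrative assumptions.

```python
import math
from typing import Callable, List, Sequence

Vector = List[float]


def softmax(xs: Sequence[float]) -> Vector:
    """Numerically stable softmax (subtract the max before exponentiating)."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def moe_layer(token: Vector,
              router_weights: Sequence[Vector],
              experts: Sequence[Callable[[Vector], Vector]],
              k: int = 2) -> Vector:
    """Route one token to its top-k experts and mix their outputs."""
    # 1. Routing network: one score (logit) per expert.
    logits = [sum(w * x for w, x in zip(row, token)) for row in router_weights]
    # 2. Top-k selection: keep only the k highest-scoring experts.
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    # 3. Gate weights, renormalised over the selected experts only.
    gates = softmax([logits[i] for i in top])
    # 4. Run only the selected experts and combine their weighted outputs.
    out = [0.0] * len(token)
    for g, i in zip(gates, top):
        y = experts[i](token)
        out = [o + g * v for o, v in zip(out, y)]
    return out


calls: List[int] = []  # records which experts actually ran


def make_expert(idx: int, scale: float) -> Callable[[Vector], Vector]:
    """Toy expert: logs that it was called, then scales its input."""
    def expert(x: Vector) -> Vector:
        calls.append(idx)
        return [scale * v for v in x]
    return expert


experts = [make_expert(i, float(i + 1)) for i in range(4)]
router = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [-1.0, 0.0]]
y = moe_layer([2.0, 1.0], router, experts, k=2)
print("experts run:", sorted(calls))  # experts run: [0, 2]
print("output:", [round(v, 3) for v in y])
```

Only two of the four experts execute for this token; the other two cost nothing. Real MoE layers add details this sketch omits, such as load-balancing objectives that keep the router from sending every token to the same experts.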

Advantages of MoE

Efficiency: Only a small portion of the model's parameters are active during inference.

Scalability: Models can grow much larger without proportionally increasing compute costs.

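Specialization: Different experts can learn to handle different kinds of inputs, such as code, reasoning steps, or natural language. This division of labor is learned during training and is not guaranteed to align neatly with human-defined tasks.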

Because of this design, MiniMax-M2.1 can draw on roughly 230B parameters of capacity while spending per-token compute closer to that of a ~10B dense model.

Benchmark Performance

MiniMax-M2.1 demonstrates strong performance across benchmarks designed to evaluate real-world software engineering and application generation tasks.

These benchmarks focus on the ability to build applications, fix GitHub issues, and work across different programming environments.

VIBE Benchmark (Application Development)

The MiniMax team introduced VIBE (Visual & Interactive Benchmark Environment) to evaluate a model’s ability to generate functional applications and UI components.

Unlike traditional benchmarks, VIBE uses an Agent-as-a-Verifier (AaaV) framework that automatically evaluates whether generated applications run successfully.

MiniMax-M2.1 achieved the following results:

| Benchmark | Score |
|---|---|
| VIBE (Average) | 88.6 |
| VIBE-Web | 91.5 |
| VIBE-Simulation | 87.1 |
| VIBE-Android | 89.7 |
| VIBE-iOS | 88.0 |
| VIBE-Backend | 86.7 |

These scores demonstrate the model’s ability to generate full-stack applications including UI, backend services, and interactive components.
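A quick check on the VIBE table: the reported average equals the unweighted mean of the five sub-benchmark scores. The source does not say how the headline number is computed, so treating it as a simple mean is our assumption, but the arithmetic below is consistent with it.

```python
from statistics import mean

# Sub-benchmark scores as listed in the VIBE table.
vibe_scores = {
    "VIBE-Web": 91.5,
    "VIBE-Simulation": 87.1,
    "VIBE-Android": 89.7,
    "VIBE-iOS": 88.0,
    "VIBE-Backend": 86.7,
}

# Unweighted mean across the five environments, rounded like the table.
average = round(mean(vibe_scores.values()), 1)
print(f"Unweighted mean of subsets: {average}")  # Unweighted mean of subsets: 88.6

# Spread between the strongest and weakest environment.
best = max(vibe_scores, key=vibe_scores.get)
weakest = min(vibe_scores, key=vibe_scores.get)
print(f"Strongest: {best}, weakest: {weakest}")  # Strongest: VIBE-Web, weakest: VIBE-Backend
```

The spread is under five points (91.5 versus 86.7), so the average is not hiding a weak platform.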

Software Engineering Benchmarks

MiniMax-M2.1 also performs strongly on software engineering benchmarks that evaluate real development workflows.

| Benchmark | Score |
|---|---|
| SWE-bench Verified | 74.0 |
| Multi-SWE-bench | 49.4 |
| SWE-bench Multilingual | 72.5 |
| Terminal-bench 2.0 | 47.9 |

These benchmarks evaluate whether a model can resolve real GitHub issues by producing working code patches, navigate multi-file repositories, work across multiple programming languages, and (in the case of Terminal-bench) complete tasks in a command-line environment.

Strong performance in these benchmarks suggests that MiniMax-M2.1 is well suited for AI-assisted software development workflows.
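SWE-bench-style scores are resolve rates: a task counts as resolved only if, after the model's patch is applied, the tests targeting the issue (often called FAIL_TO_PASS) now pass and the previously passing tests (PASS_TO_PASS) still pass. The sketch below shows that criterion; the TaskResult structure and the sample results are hypothetical, and Terminal-bench uses its own task-specific checks, so this applies most directly to the SWE-bench family.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class TaskResult:
    """Hypothetical record of test outcomes after applying a model patch."""
    instance_id: str
    fail_to_pass: Dict[str, bool]  # tests the fix is supposed to make pass
    pass_to_pass: Dict[str, bool]  # tests that must keep passing (no regressions)


def is_resolved(r: TaskResult) -> bool:
    # Resolved only if every target test passes AND nothing that worked broke.
    return all(r.fail_to_pass.values()) and all(r.pass_to_pass.values())


def resolve_rate(results: List[TaskResult]) -> float:
    """Percentage of task instances resolved."""
    if not results:
        return 0.0
    return 100.0 * sum(is_resolved(r) for r in results) / len(results)


results = [
    TaskResult("repo__issue-1", {"test_fix": True}, {"test_old": True}),
    TaskResult("repo__issue-2", {"test_fix": True}, {"test_old": False}),  # regression
    TaskResult("repo__issue-3", {"test_fix": False}, {"test_old": True}),  # incomplete fix
    TaskResult("repo__issue-4", {"test_a": True, "test_b": True}, {}),
]
print(f"Resolve rate: {resolve_rate(results):.1f}%")  # Resolve rate: 50.0%
```

Read this way, a score of 74.0 on SWE-bench Verified means the model's patches fully resolved about three out of four tasks under this all-or-nothing rule; partially correct patches earn no credit.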

Why MiniMax-M2.1 is Designed for AI Agents

MiniMax-M2.1 is optimized for multi-step reasoning and tool-driven workflows, making it a strong candidate for AI agent systems.

Typical agent pipeline:

User Request → Task Planning → Tool Invocation → Code Generation → Execution → Validation → Iterative Improvement

Such pipelines are used in autonomous coding systems, AI developer assistants, and automated DevOps tools. The combination of strong coding ability and long-context support makes MiniMax-M2.1 well suited to these scenarios.
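The pipeline above boils down to a generate, execute, validate, retry loop. Below is a minimal sketch of that loop. The fake_model and run_tests functions are hypothetical stand-ins for a real model call and a real tool (test runner, linter, shell), and planning and code generation are collapsed into one call for brevity.

```python
from typing import Callable, List, Optional, Tuple


def agent_loop(request: str,
               generate: Callable[[str, List[str]], str],
               execute: Callable[[str], Tuple[bool, str]],
               max_iterations: int = 3) -> Optional[str]:
    """Generate code, run it, and retry with error feedback until it passes."""
    feedback: List[str] = []  # errors from earlier attempts, fed into the next one
    for _ in range(max_iterations):
        code = generate(request, feedback)  # planning + code generation (model call)
        ok, output = execute(code)          # tool invocation / execution
        if ok:                              # validation passed
            return code
        feedback.append(output)             # iterative improvement
    return None                             # budget exhausted


def fake_model(request: str, feedback: List[str]) -> str:
    # Hypothetical stand-in for an LLM: buggy first try, fixed once it sees feedback.
    if not feedback:
        return "def add(a, b):\n    return a - b\n"
    return "def add(a, b):\n    return a + b\n"


def run_tests(code: str) -> Tuple[bool, str]:
    # exec is acceptable here only because the code comes from our own stub.
    namespace: dict = {}
    exec(code, namespace)
    if namespace["add"](2, 3) == 5:
        return True, "all tests passed"
    return False, "add(2, 3) != 5"


final = agent_loop("Write add(a, b)", fake_model, run_tests)
print(final)
```

The iteration cap matters in practice: without it, a model that never converges would loop forever. Code produced by a real model should also run in a sandbox rather than through exec in the agent's own process.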

Exploring MiniMax-M2.1 on Qubrid AI

Developers can explore and experiment with MiniMax-M2.1 through Qubrid AI, which provides a unified environment for working with multiple AI models.

The platform offers:

- An interactive model playground
- API access for developers
- Experimentation with multiple models
- Simplified infrastructure management

This allows developers to quickly evaluate models and build AI applications.

Testing MiniMax-M2.1 in the Qubrid AI Playground

Before integrating a model into production, it is useful to experiment with prompts in an interactive environment. The Qubrid AI Playground allows developers to test MiniMax-M2.1 directly.

Step 1: Open the Playground

Navigate to the Qubrid AI platform, open the Model Playground, and select MiniMax-M2.1 as the model.

Step 2: Configure Request Parameters

The playground allows you to configure generation parameters.

| Parameter | Description |
|---|---|
| model | Model identifier |
| prompt | Input instruction |
| max_tokens | Maximum number of tokens to generate |
| temperature | Sampling temperature; higher values produce more varied output |

Example prompt: "Build a FastAPI backend for a task management system with authentication and CRUD operations."

The model can generate backend code including API endpoints, authentication logic and database models.
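To make "controls randomness" concrete, here is how temperature and nucleus (top_p) sampling typically reshape a next-token distribution. The Python example later in this article sets temperature=1 and top_p=0.95. This is a textbook sketch with made-up logits; serving stacks differ in details (for example, whether the top_p cutoff is inclusive), so it illustrates the idea rather than Qubrid's or MiniMax's exact sampler.

```python
import math
from typing import List, Sequence


def softmax_with_temperature(logits: Sequence[float], temperature: float) -> List[float]:
    """Convert raw scores to probabilities; low temperature sharpens, high flattens."""
    if temperature <= 0:
        raise ValueError("temperature must be > 0")
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def top_p_filter(probs: Sequence[float], top_p: float) -> List[float]:
    """Keep the smallest set of most likely tokens whose mass reaches top_p, then renormalise."""
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, cumulative = set(), 0.0
    for i in order:
        kept.add(i)
        cumulative += probs[i]
        if cumulative >= top_p:
            break
    total = sum(probs[i] for i in kept)
    return [probs[i] / total if i in kept else 0.0 for i in range(len(probs))]


logits = [2.0, 1.0, 0.0]  # hypothetical scores for three candidate tokens
for t in (0.2, 1.0, 2.0):
    print(f"temperature={t}:", [round(p, 3) for p in softmax_with_temperature(logits, t)])
print("top_p=0.7 on [0.5, 0.3, 0.2]:", top_p_filter([0.5, 0.3, 0.2], 0.7))
```

With temperature=1 the scores are used unchanged, so most of the remaining control over variety comes from top_p, which trims the least likely tokens before sampling.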

Step 3: Iterate and Optimize Prompts

The playground enables rapid iteration. Developers can refine prompts, adjust parameters and test different instructions. This helps identify the best prompts before integrating them into production systems.
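If you want to keep track of which prompt and parameter combinations you tried, you can generate the variants programmatically and replay them later through the API. The sketch below only builds request payloads in the chat-completions shape used by this article's API examples; it sends nothing. The refined second prompt and the max_tokens=2048 default are arbitrary illustrative choices.

```python
from itertools import product
from typing import Dict, List

MODEL_ID = "MiniMaxAI/MiniMax-M2.1"  # identifier used in this article's API examples


def build_variants(prompts: List[str], temperatures: List[float],
                   max_tokens: int = 2048) -> List[Dict]:
    """One chat-completions payload per (prompt, temperature) pair."""
    return [
        {
            "model": MODEL_ID,
            "messages": [{"role": "user", "content": p}],
            "temperature": t,
            "max_tokens": max_tokens,
        }
        for p, t in product(prompts, temperatures)
    ]


prompts = [
    "Build a FastAPI backend for a task management system with authentication and CRUD operations.",
    # Hypothetical refinement: more specific stack and output format.
    "Build a FastAPI backend for a task management system with JWT authentication, "
    "SQLAlchemy models, and CRUD endpoints. Return one file per module.",
]
variants = build_variants(prompts, [0.2, 0.7, 1.0])
print(len(variants), "variants")  # 6 variants
```

Logging each payload next to the output it produced makes it easier to move the winning prompt into production code unchanged.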

Integrating MiniMax-M2.1 Using the Qubrid AI API

Once prompts are validated in the playground, developers can integrate the model into applications using the API provided by Qubrid AI.

This allows MiniMax-M2.1 to be used in applications like developer assistants, automation tools, AI agents, and software engineering platforms.

Example Python API Request

Below is a simple Python example demonstrating how to send a request to MiniMax-M2.1.

```python
import os

from openai import OpenAI

# Initialize the OpenAI client with the Qubrid base URL.
# The API key is read from the QUBRID_API_KEY environment variable.
client = OpenAI(
    base_url="https://platform.qubrid.com/v1",
    api_key=os.environ["QUBRID_API_KEY"],
)

# Request a streamed chat completion from MiniMax-M2.1.
stream = client.chat.completions.create(
    model="MiniMaxAI/MiniMax-M2.1",
    messages=[
        {
            "role": "user",
            "content": "Explain quantum computing in simple terms",
        }
    ],
    max_tokens=8192,
    temperature=1,
    top_p=0.95,
    stream=True,
)

# Print each text delta as it arrives; some chunks carry no content.
for chunk in stream:
    if chunk.choices and chunk.choices[0].delta.content:
        print(chunk.choices[0].delta.content, end="", flush=True)

print()
```
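Printing tokens is fine for a demo, but an application usually needs the full reply as a string. The helper below joins the deltas from any iterable of chunks shaped like the SDK's streaming objects; the fake chunks built with SimpleNamespace are stand-ins so the sketch runs without an API key or network access.

```python
from types import SimpleNamespace
from typing import Iterable


def collect_stream(stream: Iterable) -> str:
    """Join the text deltas of a streamed chat completion into one string."""
    parts = []
    for chunk in stream:
        # Skip chunks with no choices or an empty/None delta.
        if chunk.choices and chunk.choices[0].delta.content:
            parts.append(chunk.choices[0].delta.content)
    return "".join(parts)


def fake_chunk(text):
    """Stand-in with the same attribute path as an SDK streaming chunk."""
    return SimpleNamespace(choices=[SimpleNamespace(delta=SimpleNamespace(content=text))])


fake_stream = [fake_chunk("Quantum "), fake_chunk("bits"), SimpleNamespace(choices=[]), fake_chunk(None)]
print(collect_stream(fake_stream))  # Quantum bits
```

With the real client you would call collect_stream(stream) instead of the print loop; a stream can be consumed only once, so pick one or the other.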

Example API Request Using cURL

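Developers can also test the API directly from the command line. Because this request sets "stream": true, an OpenAI-compatible endpoint returns the response incrementally as server-sent events, one JSON chunk per data: line; set "stream": false to receive the complete response as a single JSON object.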

```bash
curl -X POST "https://platform.qubrid.com/v1/chat/completions" \
  -H "Authorization: Bearer $QUBRID_API_KEY" \
  -H "Content-Type: application/json" \
  -d '{
    "model": "MiniMaxAI/MiniMax-M2.1",
    "messages": [
      {
        "role": "user",
        "content": "Explain quantum computing in simple terms"
      }
    ],
    "temperature": 1,
    "max_tokens": 8192,
    "stream": true,
    "top_p": 0.95
  }'
```
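With "stream": true, the raw output is a sequence of data: lines rather than one JSON document. The sketch below extracts the generated text from such lines. It assumes Qubrid follows the OpenAI-compatible streaming format (JSON chunks with choices[].delta.content, terminated by data: [DONE]); the sample lines are illustrative, not captured output.

```python
import json
from typing import Iterable


def text_from_sse(lines: Iterable[str]) -> str:
    """Extract generated text from OpenAI-style server-sent-event lines."""
    parts = []
    for line in lines:
        line = line.strip()
        if not line.startswith("data:"):
            continue  # skip blank separators and keep-alive comments
        payload = line[len("data:"):].strip()
        if payload == "[DONE]":
            break  # end-of-stream sentinel
        event = json.loads(payload)
        for choice in event.get("choices", []):
            content = (choice.get("delta") or {}).get("content")
            if content:
                parts.append(content)
    return "".join(parts)


sample = [
    'data: {"choices": [{"delta": {"role": "assistant", "content": ""}}]}',
    "",
    'data: {"choices": [{"delta": {"content": "Quantum computers use qubits"}}]}',
    "",
    'data: {"choices": [{"delta": {"content": "."}}]}',
    "data: [DONE]",
]
print(text_from_sse(sample))  # Quantum computers use qubits.
```

Because the function accepts any iterable of lines, you could also pass it sys.stdin and pipe the curl output into the script.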

Why Platforms Like Qubrid AI Matter

Deploying large language models often requires specialized infrastructure and expertise. Platforms like Qubrid AI make the process easier by offering centralized access to models, a playground for experimentation, scalable APIs, and the ability to work with multiple models in one place.

This allows developers to focus on building AI applications instead of managing infrastructure.

👉 Explore other models on the Qubrid platform: https://qubrid.com/models

Our Thoughts

MiniMax-M2.1 represents a new generation of language models optimized for real-world developer workflows. With its mixture-of-experts architecture, strong coding performance, and ability to handle long contexts, the model is well suited for building AI coding assistants, autonomous developer agents, and intelligent automation systems.

By making advanced models accessible through platforms like Qubrid AI, developers can rapidly prototype and deploy AI-powered applications without complex infrastructure. As AI agent ecosystems continue to evolve, models like MiniMax-M2.1 will likely play an important role in shaping the future of AI-driven software development. 🚀

👉 Try MiniMax-M2.1 on the Qubrid AI Playground: https://qubrid.com/models/minimax-m2.1

👉 See complete tutorial on how to work with the MiniMax-M2.1 model: https://youtu.be/8D1hrr4pv5M?si=XW7iC5u22qNsgAl1