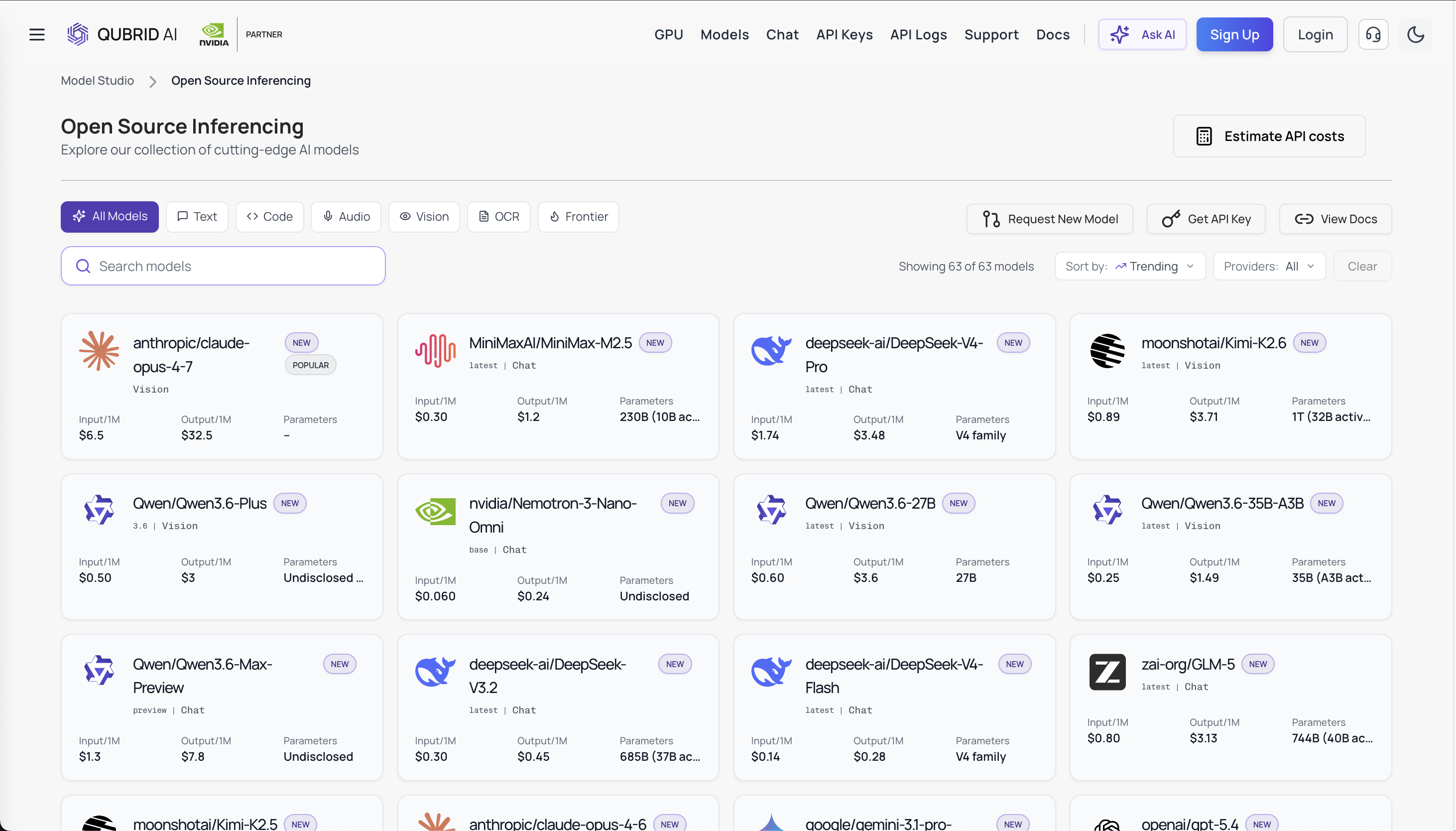

GLM-5.1 vs Qwen 3.6 Plus: The Next Generation of Enterprise AI on Qubrid

The landscape of enterprise large language models continues to evolve at an unprecedented pace. With Qwen 3.6 Plus already live on Qubrid AI and GLM-5.1 on the horizon, developers and enterprises face an important decision: which model is right for their workloads?

👉 Try Qwen 3.6 Plus here: https://platform.qubrid.com/playground?model=qwen3.6-plus

This isn't just another benchmark comparison. We're diving into the architectural foundations, real-world performance characteristics, and strategic positioning of both models to help you understand where each excels and why Qubrid AI is the optimal platform for deploying both at scale.

Understanding the Players

Qwen 3.6 Plus is production-ready today on Qubrid AI. It represents the state of the art in instruction-following, reasoning, and multimodal capabilities. Since going live on Qubrid, it's already proven itself in demanding enterprise workloads, not in preview, not behind gated access, but performing reliably in production from day one.

GLM-5.1, developed by Z.ai, is coming soon to Qubrid. Building on the success of earlier GLM models, GLM-5.1 introduces a new generation of capabilities focused on agentic behavior, advanced reasoning, and developer-centric workflows. Early indicators suggest it will push the boundaries of what's possible in specialized reasoning tasks.

The key question isn't which is universally "better" it's understanding where each model's strengths align with your specific needs.

Side-by-Side Comparison

Aspect | GLM-5.1 | Qwen 3.6 Plus |

|---|---|---|

Status | Coming Soon to Qubrid | Live & Production-Ready |

Architecture | 744B MoE (40B active) | Dense Transformer (Optimized) |

Context Window | 200K tokens | Extended (production-optimized) |

Primary Focus | Agentic Engineering & Coding | General Purpose & Multimodal |

Max Execution | 8-hour autonomous tasks | Multi-turn conversations |

SWE-Bench Pro | 58.4 (SOTA) | Competitive on real-world tasks |

SWE-Bench Verified | 77.8% | Strong general performance |

AIME 2025 | ~92-95% | Competitive reasoning |

NL2Repo | 42.7 (Top ranking) | General repository understanding |

Terminal-Bench 2.0 | 69.0 | Strong tool interaction |

MCP-Atlas | 71.8 (Leads field) | Strong protocol support |

Multimodal | Text-focused | Text + Image |

Sustained Work | 600+ iterations over 8 hours | Consistent per-turn quality |

Cost per 1M Input Tokens | $1.40 | Qubrid optimized pricing |

Cost per 1M Output Tokens | $4.40 | Qubrid optimized pricing |

Throughput | 70.4 tokens/sec | Optimized for enterprise scale |

Open-Source | Yes (HuggingFace MIT) | Available via Qubrid |

Training Hardware | Huawei Ascend (No Nvidia) |

Architecture & Operational Efficiency

Both models represent a departure from traditional monolithic architectures, but they approach scaling differently.

Qwen 3.6 Plus employs an optimized dense transformer architecture refined through extensive training on multimodal data. This approach delivers consistent performance across diverse tasks while maintaining excellent inference efficiency. The model benefits from a massive instruction-tuned dataset, making it exceptionally good at understanding nuanced human intent across thousands of use cases.

GLM-5.1 is built on an enhanced Mixture-of-Experts (MoE) architecture that routes computational resources dynamically. Rather than activating every parameter for every token, MoE selectively engages specialized expert networks. This architectural choice delivers two major advantages:

Efficient scaling - Large model capacity without proportional inference costs

Expert specialization - Different experts develop expertise in distinct domains

For enterprises deploying at scale, this distinction matters. MoE architectures reduce per-token computational overhead, translating directly to lower infrastructure costs when running millions of inferences monthly.

Performance Across Critical Benchmarks

Let's talk numbers. Here's where the models differentiate themselves:

Qwen 3.6 Plus excels in:

Multi-turn conversation and context retention

Instruction following and alignment (MMLU, MATH benchmarks)

Real-world application tasks requiring broad knowledge

Multimodal understanding (text + image reasoning)

Long-context processing with maintained coherence

Early telemetry from Qubrid shows Qwen 3.6 Plus achieving strong performance on enterprise-specific benchmarks, customer support automation, documentation understanding, and knowledge extraction tasks.

GLM-5.1 targets different specializations:

Advanced mathematical reasoning (AIME 2025: 95.7)

Complex coding tasks (LiveCodeBench v6: 84.9)

Agentic workflows and multi-step planning

Tool usage and terminal interaction (Terminal Bench 2.0: 41.0)

Long-horizon decision making

The pattern is clear: Qwen 3.6 Plus is your generalist powerhouse, while GLM-5.1 is engineered for specialist domains, particularly technical and reasoning-intensive workloads.

Real-World Application Profiles

When Qwen 3.6 Plus Wins

Qwen shines in enterprise scenarios requiring broad applicability:

Customer Service Automation - Understanding diverse queries across product categories, handling multi-turn conversations with memory

Content Generation - Creating product descriptions, marketing copy, and social media content with strong instruction adherence

Knowledge Extraction - RAG pipelines processing diverse documents, maintaining context across retrieval chains

Multimodal Analysis - Understanding customer screenshots, diagrams, and visual content alongside text

Internal Documentation - Answering employee questions about policies, procedures, and institutional knowledge

The beauty of Qwen 3.6 Plus in production is its reliability across undefined problem spaces. You throw varied tasks at it, and it performs predictably.

When GLM-5.1 Wins

GLM-5.1's architecture and training focus on scenarios demanding deeper reasoning:

Software Development Assistance - Agentic code generation, repository-wide refactoring, bug analysis across multiple files

Mathematical Problem Solving - From high school competition math to academic research problem formulation

Scientific Reasoning - Hypothesis generation, experimental design, data interpretation

Complex Workflow Orchestration - Multi-step processes requiring tool integration, environment state management, and sequential decision-making

Advanced Data Analysis - Transforming raw data into insights through chains of analytical reasoning

GLM-5.1's MoE architecture activates only the experts relevant to each token, making it particularly efficient for these deep-reasoning workloads.

Deployment Considerations on Qubrid

Both models will be available on Qubrid's platform, and here's why that matters:

Qubrid AI abstracts away the infrastructure complexity. You get:

Instant API access - No setup hassle, start making requests immediately.

GPU optimization - Models run on optimal hardware for their architecture (GPUs provisioned for your specific throughput requirements)

Cost transparency - Pay for what you use, with clear per-token pricing

Production reliability - Built-in monitoring, rate limiting, and fallback strategies

Context window flexibility - Both models are available with extended context for handling larger documents and complex prompts

For enterprises, this eliminates the capital expenditure and operational overhead of self-hosting. You're accessing cutting-edge models with the scalability and reliability of a purpose-built platform.

The Inference Cost Factor

This is where MoE architecture decisions compound real-world impact.

Qwen 3.6 Plus requires loading substantially more parameters per token due to its dense architecture. For organizations running continuous inference workloads (customer support, content generation, monitoring systems), this means higher per-token costs at scale.

GLM-5.1's MoE design selectively activates experts. In practical terms, a reasoning-heavy task might activate 30% of available parameters, while a simpler task activates 15%. This translates to meaningfully lower costs per million tokens processed over time.

For a mid-size company running 10 million tokens daily across their platform, this difference compounds to significant monthly savings. On Qubrid, this cost advantage passes directly to you.

Which Model Should You Choose?

Choose Qwen 3.6 Plus if you need:

Production-ready reliability right now

Versatility across diverse task types

Multimodal capabilities (text + image understanding)

Strong instruction-following in ambiguous scenarios

A model already proven in enterprise deployments

Choose GLM-5.1 when you prioritize:

Maximum performance on reasoning-intensive tasks

Lower inference costs at massive scale

Agentic workflows and tool-use scenarios

Specialized domain performance (math, code, science)

Efficiency in computational resource allocation

The Hybrid Approach

Here's what smart enterprises are doing: deploying both.

Route requests to Qwen 3.6 Plus for general-purpose tasks, conversation, and content creation. Use GLM-5.1 for specialized workloads, your software engineering support, research assistance, and complex analytical tasks.

This hybrid approach maximizes performance-per-dollar, ensuring you're never overpaying for general-purpose capability on tasks that would be better served by a specialized model.

On Qubrid's unified platform, switching between models is frictionless. Same API, same authentication, same monitoring infrastructure.

Looking Forward

Qwen 3.6 Plus demonstrates that dense architectures remain formidable for real-world enterprise tasks. It's proof that breadth and generalization still matter deeply.

GLM-5.1's architecture signals the industry's evolving optimization focus: not bigger models, but smarter allocation of parameter capacity. MoE and similar routing mechanisms will likely become standard in high-performance LLMs.

The future of enterprise AI isn't about picking a single "best" model. It's about having access to complementary models optimized for different purposes, deployed on infrastructure that makes switching between them trivial.

Get Started Today

Qwen 3.6 Plus is live now on Qubrid AI.

👉 Try Qwen 3.6 Plus here: https://platform.qubrid.com/playground?model=qwen3.6-plus

GLM-5.1 coming soon. We'll announce the exact availability date on our blog and developer documentation.

Want hands-on experience? Try both models in the Qubrid Playground, with free tokens included on your first top-up.

👉 Try all models here and start building: https://platform.qubrid.com/models