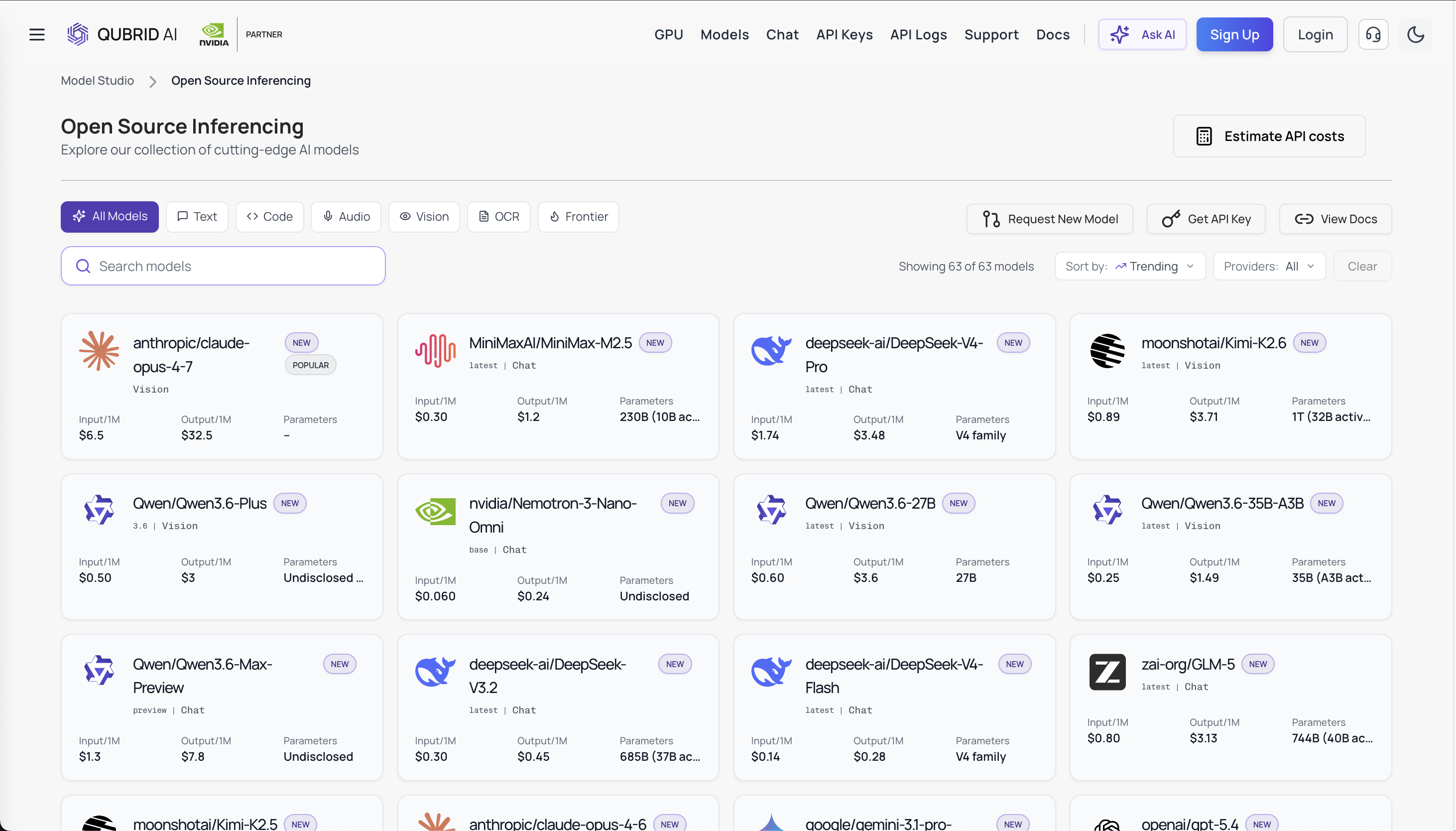

Qwen 3.6 Plus vs Gemma 4 vs Claude Opus 4.6: Choose Your Model on Qubrid AI in 2026

By April 2026, developers face an unprecedented choice: three heavyweight LLMs with fundamentally different philosophies. The problem? Picking the right one for your project is hard. That's why Qubrid AI lets you test all three directly on our platform side-by-side, with real metrics, against your actual workload. Here's how to choose.

The Three Models (And Why It Matters)

Qwen 3.6 Plus is the cost leader. Alibaba's hybrid thinking mode lets you toggle between fast responses and extended reasoning. Open-weights at scale. Multilingual (119 languages). Perfect for high-volume pipelines where cost compounds.

👉 You can try Qwen 3.6 Plus on Qubrid AI right now: https://platform.qubrid.com/playground?model=qwen3.6-plus

Gemma 4 is the open-source powerhouse. Google's first open-weight mixture-of-experts model. True multimodal (vision, audio, video coming). Apache 2.0 licensed. Built for teams that want zero licensing friction and full deployment control.

Claude Opus 4.6 is the reliability champion. Premium, but uncompromising instruction-following. Built for long agentic chains where hallucinations are catastrophic. The default for production autonomous systems.

👉 You can try Claude Opus 4.6 on Qubrid AI right now: https://platform.qubrid.com/playground?model=anthropic-claude-opus-4-6

Benchmarks: What the Data Shows

Coding Performance

SWE-bench (real GitHub issues):

Claude: Strongest. Explicitly trained on agentic scenarios.

Qwen: Very competitive with thinking mode. Fewer hallucinations.

Gemma: Solid for open-weight. Viable for most tasks.

HumanEval/MBPP: All three are near-ceiling. Qwen excels at multilingual code. Claude produces cleaner, well-documented output. Gemma impresses with its accessibility.

The reality: Claude edges ahead on hard tasks, but Qwen, with thinking mode, closes the gap significantly. Gemma is credible if you weigh cost and control higher than marginal performance.

Model Architecture Decoded

Qwen 3.6 Plus: Dense transformer with hybrid thinking. Fast mode for simple queries. Extended reasoning mode for hard problems. You choose per task. Massive multilingual training (119 languages), strong in math and code.

Gemma 4: Mixture-of-experts at scale. The 26B variant activates ~9B params at inference, giving it the capability density of much larger models without the hardware cost. Native multimodal. 128K–256K context. Apache 2.0.

Claude Opus 4.6: Purpose-built for agents. Long tool-calling chains. 200K context with consistent quality (no mid-context degradation). Trained on agentic failure modes. Follows instructions even under pressure.

Context Windows: What You Can Hold

| Model | Native | Quality |
|---|---|---|
| Qwen 3.6 Plus | 128K | Stable; extends to 1M with degradation |
| Gemma 4 | 128K–256K | Stable throughout |
| Claude Opus 4.6 | 200K | Consistently high, no degradation |

For agentic workflows holding code files, test logs, and history (typical: 50–200K tokens), Claude's consistent 200K beats extended windows with degradation. Reliability > raw size.
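A quick way to sanity-check this against your own workload is to compare its token count with each native window. The sketch below uses the figures from the table above; the function name, the 8,000-token output reserve, and the choice to take Gemma 4's lower bound are illustrative assumptions, not platform behavior.

```python
# Native context windows from the table above, in tokens (K taken as 1,000).
# Gemma 4 is listed as 128K-256K; the lower bound is used to stay conservative.
NATIVE_CONTEXT = {
    "Qwen 3.6 Plus": 128_000,
    "Gemma 4": 128_000,
    "Claude Opus 4.6": 200_000,
}


def models_that_fit(workload_tokens: int, reserve_for_output: int = 8_000) -> list[str]:
    """Return models whose native window holds the workload plus an output reserve.

    reserve_for_output is a hypothetical safety margin for the model's reply.
    """
    needed = workload_tokens + reserve_for_output
    return [name for name, window in NATIVE_CONTEXT.items() if window >= needed]


if __name__ == "__main__":
    for tokens in (50_000, 150_000):
        print(tokens, models_that_fit(tokens))
```

This only checks native size. It says nothing about quality inside the window, which is the point the paragraph above makes.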

Multimodal: Which Model Sees Best?

Claude: Strong vision. Handles screenshots + code context coherently.

Qwen: Solid vision support. General image understanding works. Less emphasized.

Gemma: True multimodal. Vision, audio, video-ready. Native to architecture.

Winner: Gemma 4 if multimodal is core to your workflow.

Agentic Tool Use: Where It Matters Most

Claude Opus 4.6: Meticulous. Rarely hallucinates arguments. Recovers from tool failures. Maintains coherence over 20+ calls. This is why it's the production default.

Qwen 3.6 Plus: Thinking mode before tool calls reduces errors. Latency trade-off: you wait longer for higher accuracy.

Gemma 4: Solid function calling. Good for most use cases. Claude's advantage shows on mission-critical loops.

Cost: Where Economics Diverge

| Model | Cost per 1M tokens (USD) | Scaling |
|---|---|---|
| Qwen 3.6 Plus | $1–3 | Advantage compounds at volume |
| Gemma 4 | $0 (self-hosted) | Best for enterprise with hardware |
| Claude Opus 4.6 | $15–25 | Premium, justified by reliability |

At 10M tokens/month, Qwen costs $10–30 and Claude costs $150–250. At 1B tokens/month, the same rates put the gap at roughly $12k–24k per month.
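To rerun that arithmetic for your own volume, here is a minimal sketch using the per-1M-token ranges from the table. Gemma 4 is left out because its self-hosted cost depends on your hardware, which the table does not price. The function and variable names are illustrative.

```python
# Price ranges in USD per 1M tokens, copied from the cost table above.
PRICE_PER_MILLION = {
    "Qwen 3.6 Plus": (1.0, 3.0),
    "Claude Opus 4.6": (15.0, 25.0),
}


def monthly_cost(model: str, tokens_per_month: int) -> tuple[float, float]:
    """Return the (low, high) monthly cost in USD for a given token volume."""
    low, high = PRICE_PER_MILLION[model]
    millions = tokens_per_month / 1_000_000
    return (low * millions, high * millions)


if __name__ == "__main__":
    for volume in (10_000_000, 1_000_000_000):
        for model in PRICE_PER_MILLION:
            low, high = monthly_cost(model, volume)
            print(f"{volume:>13,} tokens  {model:<16} ${low:,.0f} to ${high:,.0f}")
```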

What Developers Actually Report

Claude users: Consistent output. Reliable tool use. Long agent loops that don't drift. Peace of mind.

Qwen users: Impressive performance at 1/5 the cost or less. Thinking mode genuinely useful. Multilingual strength. Occasional edge cases.

Gemma users: Surprised by the capability. Full control over deployment. Multimodal potential. Great for custom architectures.

Making the Choice

Choose Claude Opus 4.6 if:

Reliability is non-negotiable (user-facing, safety-critical)

Running long, complex agent chains

Cost is secondary

Choose Qwen 3.6 Plus if:

Volume matters (1000s+ tasks/day)

Cost is a real constraint

Multilingual or batch workflows

Occasional retries are acceptable

Choose Gemma 4 if:

Multimodal is core (vision, audio, video)

Full deployment control needed (on-prem, edge)

Apache 2.0 licensing required

You have ML infrastructure

The Smart Strategy: Use All Three on Qubrid AI

The best teams don't pick one model. They build a portfolio:

Claude Opus 4.6 → Critical paths (security fixes, user-facing decisions)

Qwen 3.6 Plus → High-volume, lower-stakes work (batch code gen, testing)

Gemma 4 → Self-hosted, multimodal, privacy-critical tasks
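In code, a portfolio like this comes down to a small routing rule. The sketch below is one possible version: the `Task` fields, the `pick_model` name, and the priority order (privacy and multimodal checked before criticality) are assumptions for illustration, so adjust them to your own risk profile.

```python
from dataclasses import dataclass


@dataclass
class Task:
    """Hypothetical task descriptor; the fields mirror the three buckets above."""
    critical: bool = False           # security fixes, user-facing decisions
    privacy_sensitive: bool = False  # data must stay on self-hosted infrastructure
    multimodal: bool = False         # needs vision or audio input


def pick_model(task: Task) -> str:
    """Route a task to a model. Checking privacy first is an assumed priority."""
    if task.privacy_sensitive or task.multimodal:
        return "Gemma 4"
    if task.critical:
        return "Claude Opus 4.6"
    return "Qwen 3.6 Plus"  # default: high-volume, lower-stakes work


if __name__ == "__main__":
    print(pick_model(Task(critical=True)))
    print(pick_model(Task()))
    print(pick_model(Task(privacy_sensitive=True, critical=True)))
```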

Test on Qubrid AI Today

👉 You can try Qwen 3.6 Plus on Qubrid AI right now: https://platform.qubrid.com/playground?model=qwen3.6-plus

👉 You can try Claude Opus 4.6 on Qubrid AI right now: https://platform.qubrid.com/playground?model=anthropic-claude-opus-4-6

Run your actual workflow against all three:

See real latency, token usage, and cost

Compare output quality side-by-side

Trace tool calls and error recovery

Build your model portfolio
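If you also want to script the comparison, a minimal harness could look like the sketch below. It makes no assumptions about the Qubrid AI API: each model is passed in as a plain callable that you would write yourself, and the stub functions here are placeholders, not real model calls.

```python
import time
from typing import Callable


def compare(models: dict[str, Callable[[str], str]], prompt: str) -> list[dict]:
    """Run one prompt through each callable and record latency and output size."""
    results = []
    for name, call in models.items():
        start = time.perf_counter()
        output = call(prompt)
        elapsed = time.perf_counter() - start
        results.append({
            "model": name,
            "latency_s": elapsed,
            "output_chars": len(output),
            "output": output,
        })
    # Fastest first, so the latency ranking is easy to read.
    return sorted(results, key=lambda row: row["latency_s"])


if __name__ == "__main__":
    # Placeholder callables; replace each with your own client code.
    stubs = {
        "model-a": lambda p: p.upper(),
        "model-b": lambda p: p[::-1],
    }
    for row in compare(stubs, "fix the failing test"):
        print(f'{row["model"]}: {row["latency_s"]:.6f}s, {row["output_chars"]} chars')
```

Character count stands in for token usage here. Swap in real token counts if your client returns them.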

The best model for your problem isn't determined by benchmarks. It's determined by testing it on your actual problem.

Claim your free credits with your first top-up on Qubrid AI and find out which model wins for your use case.

👉 Try it here: https://platform.qubrid.com/models